Page role

The Prices page is GPUJet's maintained cost snapshot.

Use this page for pricing logic, source links and cost warnings. Because AI model, API and GPU prices change often, treat this as a maintained snapshot and always verify the official pricing source before payment.

Pricing freshness update

Last checked: May 5, 2026

AI model prices, GPU cloud prices, discounts, cached-token pricing, batch discounts, regions and marketplace rates can change quickly. Use this page as a planning snapshot, then open the official source before buying hosting, renting GPU cloud or connecting a paid API key.

| Pricing source | What to verify | Beginner risk | Official link |

|---|---|---|---|

| OpenAI API pricing | Input, cached input, output, batch, realtime and tool/container pricing. | Medium | OpenAI pricing |

| Claude API pricing | Opus, Sonnet, Haiku, cache writes, cache hits, batch and model deprecations. | Medium | Claude pricing |

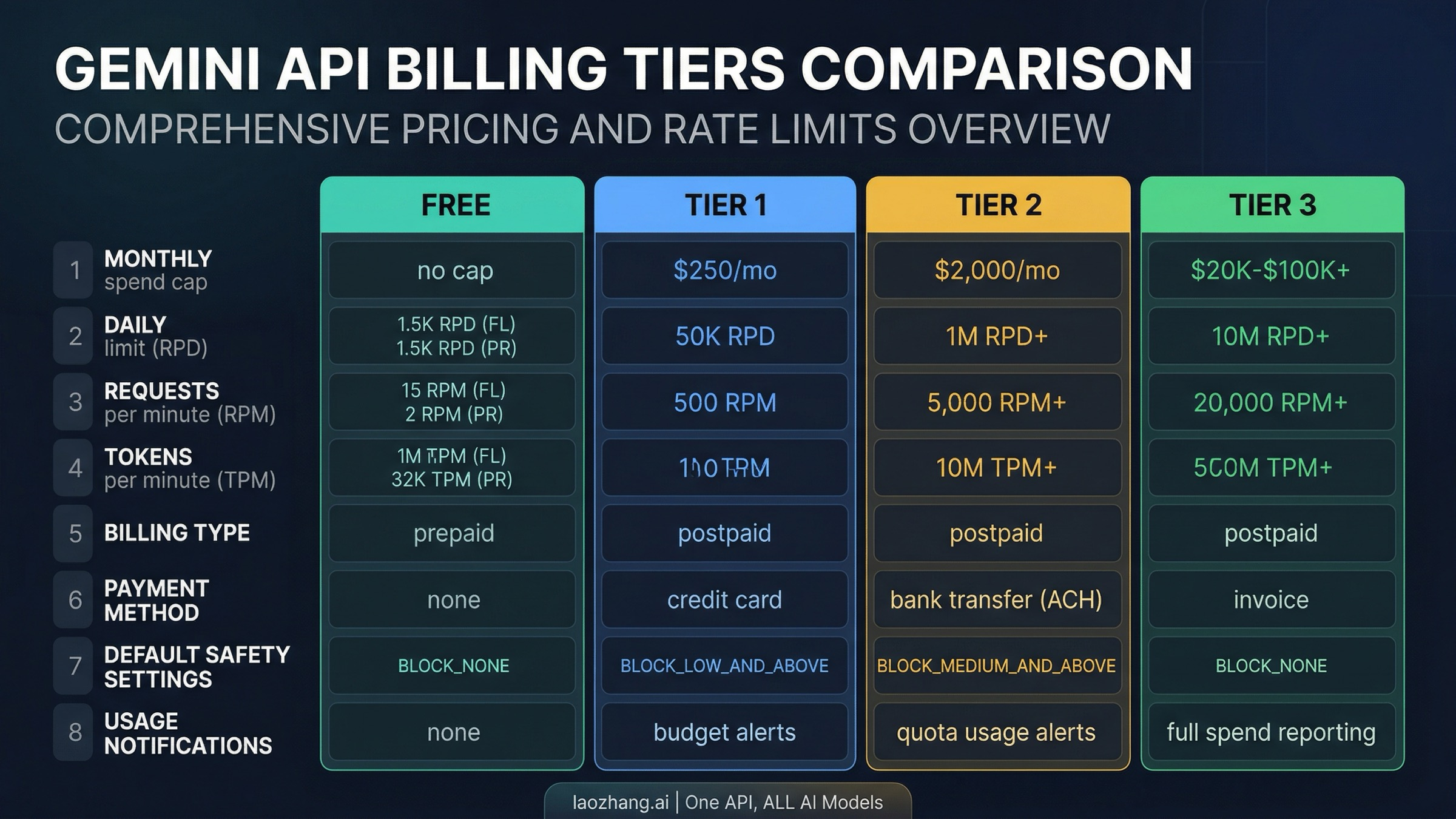

| Gemini API pricing | Free vs paid tier, batch discounts, context caching, model availability and production rate limits. | Medium | Gemini pricing |

| DeepSeek API pricing | V4 Flash, V4 Pro, cache-hit pricing, discount windows and balance deduction rules. | Medium / high | DeepSeek pricing |

| DigitalOcean GPU Droplets | RTX, L40S, H100, H200, MI300X, hourly rates, regions and 1x vs 8x configurations. | Medium | DigitalOcean GPU pricing |

| AWS Bedrock / AgentCore | Model tokens, agent runtime, memory, gateway, browser/code tools and modular usage units. | High | Bedrock pricing / AgentCore pricing |

GPUJet Prices — May 2026 clean snapshot

Cloud AI pricing, GPU costs and model API prices without outdated notes.

This page is a practical planning snapshot for AI builders. It covers model API prices, GPU cloud pricing logic, hosting choices, cost-control rules and official source links. Prices can change quickly, so always open the official pricing page before buying hosting, renting GPU cloud or connecting a paid API key.

Model API pricing

Use official pricing pages before estimating real usage.

Token price is only one part of AI cost. Cached input, batch jobs, tool calls, realtime audio, long context, retries, image generation, grounding and agent loops can change the final bill.

| Provider | Check this before use | Beginner risk | Official source |
|---|---|---|---|
| OpenAI API | Input, cached input, output, batch, realtime, audio, tools and container pricing. | Medium | OpenAI pricing |
| Claude API | Model tier, prompt cache writes, cache hits, batch pricing and platform feature pricing. | Medium | Claude pricing |
| Gemini API | Free tier, paid tier, context caching, batch pricing, image/audio pricing and grounding. | Medium | Gemini pricing |
| DeepSeek API | V4 Flash, V4 Pro, cache-hit price, cache-miss price, output price and current discount window. DeepSeek states the V4 Pro 75% discount is extended until 2026-05-31 15:59 UTC. | Medium / high | DeepSeek pricing |
| AWS Bedrock / AgentCore | Model tokens, agent runtime, memory, gateway, browser/code tools and modular usage units. | High | Bedrock pricing / AgentCore pricing |
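
The cost factors listed above (cached input, batch discounts and retries) can be folded into a single per-request estimate. The sketch below is a rough planning aid, not any provider's billing logic: the `Rates` class, the `request_cost` function and every number in it are hypothetical placeholders, and real providers may apply caching and batch discounts differently.

```python
from dataclasses import dataclass


@dataclass
class Rates:
    """Hypothetical prices in USD per 1,000,000 tokens (not real provider rates)."""
    input_per_m: float
    cached_input_per_m: float
    output_per_m: float


def request_cost(rates, input_tokens, output_tokens,
                 cached_fraction=0.0, batch_discount=0.0, retries=0):
    """Estimate the cost of one logical request, including retries.

    cached_fraction: share of input tokens billed at the cached rate (0..1).
    batch_discount:  fractional discount applied to the whole call (0..1).
    retries:         extra full attempts that are also billed.
    """
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    one_attempt = (
        fresh * rates.input_per_m
        + cached * rates.cached_input_per_m
        + output_tokens * rates.output_per_m
    ) / 1_000_000
    return one_attempt * (1 - batch_discount) * (1 + retries)


# Placeholder rates chosen for easy arithmetic only.
example = Rates(input_per_m=2.0, cached_input_per_m=0.5, output_per_m=8.0)
base = request_cost(example, 10_000, 1_000)
with_retries = request_cost(example, 10_000, 1_000, retries=2)
with_cache = request_cost(example, 10_000, 1_000, cached_fraction=0.8)
print(f"base={base:.4f} retries={with_retries:.4f} cached={with_cache:.4f}")
```

With these placeholder inputs, two retries triple the cost of the request, while an 80% cache share cuts it by roughly 40%. Replace the rates with values from the official pricing page before relying on the result.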

GPU cloud pricing

Hourly GPU instances become expensive when left running.

GPU cloud is useful for local LLM tests, image model experiments, fine-tuning tests and workloads that need direct compute. For many beginner apps, API-first AI plus a small VPS is safer and cheaper.

| Provider | Use it for | What to verify | Pricing source |

|---|---|---|---|

| DigitalOcean GPU Droplets | Managed GPU droplets and clearer cloud setup. | GPU model, memory, region, hourly rate and multi-GPU configuration. | DigitalOcean GPU pricing |

| RunPod | GPU pods, serverless GPU and burst experiments. | Active time, storage, template behavior and whether the workload keeps billing while idle. | RunPod pricing |

| Vast.ai | Marketplace GPU hunting and low-cost experiments. | Host reliability, verification, region, storage, interruptible risk and current live price. | Vast.ai pricing |

Best choice by use case

Do not choose AI infrastructure by price alone.

Realistic cost scenarios

What a beginner AI project can actually cost

Exact prices change, but beginners need realistic ranges before they rent servers, connect APIs or test GPU cloud. These examples are reference scenarios, not fixed quotes.

Pricing reliability note

How to read GPUJet price tables

Pricing pages change faster than normal tutorials. Treat GPUJet pricing as a planning snapshot, then open the official source link before buying, renting or connecting a paid API key.

Useful follow-ups: AI API Cost Control, GPU Cloud Decision Guide, and Cloud Guide.

API cost control

Set billing limits before testing AI APIs

Before connecting a model API to a website, bot or AI agent, set usage limits and monitor billing. Small test prompts are cheap, but repeated requests, long context and forgotten automations can raise costs quickly.
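
A provider-side billing limit is the real safeguard, but a small client-side cap can stop a runaway loop earlier. The sketch below assumes you can estimate the cost of each call before sending it. `SpendGuard` and `BudgetExceeded` are hypothetical names, not part of any provider SDK, and the per-call cost is a placeholder.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a planned call would push spend past the cap."""


class SpendGuard:
    """Hypothetical client-side cap; it does not replace provider billing limits."""

    def __init__(self, limit_usd):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, estimated_cost_usd):
        """Record a call's estimated cost, or refuse it if the cap would be exceeded."""
        if self.spent_usd + estimated_cost_usd > self.limit_usd:
            raise BudgetExceeded(
                f"cap {self.limit_usd:.2f} USD reached at {self.spent_usd:.2f} USD"
            )
        self.spent_usd += estimated_cost_usd
        return self.limit_usd - self.spent_usd


guard = SpendGuard(limit_usd=1.00)
calls_made = 0
try:
    # Simulate a forgotten automation that keeps sending requests.
    for _ in range(1000):
        guard.charge(0.03)  # placeholder per-call estimate
        calls_made += 1
except BudgetExceeded as err:
    print(f"stopped after {calls_made} calls: {err}")
```

Call `charge` before each request so the loop stops on your side, even if the provider's own limit is set higher or has not been configured yet.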

Next cost steps

Turn prices into a real project budget.

After checking prices, connect them to your actual workload: one test run, one normal day, one bad day, the runtime choice and the agent risk level.
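
One way to connect prices to a workload is to scale a single test run up to a normal day, a bad day and a month. The sketch below is only an illustration: `project_budget`, the run counts and the assumption of two bad days per month are all hypothetical inputs you should replace with your own.

```python
def project_budget(cost_per_run, normal_runs_per_day, bad_runs_per_day,
                   bad_days_per_month=2, days_per_month=30):
    """Turn a per-run cost into daily and monthly figures.

    All inputs are assumptions you supply; nothing here is a quoted price.
    """
    normal_day = cost_per_run * normal_runs_per_day
    bad_day = cost_per_run * bad_runs_per_day
    normal_days = days_per_month - bad_days_per_month
    month = normal_day * normal_days + bad_day * bad_days_per_month
    return {"test_run": cost_per_run, "normal_day": normal_day,
            "bad_day": bad_day, "month": month}


budget = project_budget(cost_per_run=0.02, normal_runs_per_day=200,
                        bad_runs_per_day=5_000)
for label, usd in budget.items():
    print(f"{label:>10}: {usd:8.2f} USD")
```

In this example two bad days cost more than the other 28 days combined, which is why the bad-day figure belongs in the budget alongside the normal one.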

Useful follow-ups: Cost Planning Checklist, Infrastructure Hub and Risk Levels.

Pricing maintenance policy

This page must be checked more often than normal tutorials.

Model APIs, GPU cloud regions, discounts, cached-token pricing, batch pricing and agent platform costs can change quickly. GPUJet treats this page as a planning snapshot, not a permanent price list.

GPU Cloud Burn Calculator

See what happens if a GPU keeps running.

GPU hourly prices can look small, but daily and monthly exposure adds up quickly. Use this before renting GPU cloud.

GPUJet warning: stop or destroy GPU instances when tests end.
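
The burn arithmetic is simple enough to check by hand or in a few lines of code. The sketch below assumes the instance bills for every hour it exists and uses a 30-day month. `gpu_burn` is a hypothetical helper and the hourly rate is a placeholder, not a quote from any provider.

```python
def gpu_burn(hourly_rate_usd, gpu_count=1, hours_per_day=24, days=30):
    """Return (daily, monthly) cost if the instance is never stopped."""
    daily = hourly_rate_usd * gpu_count * hours_per_day
    return daily, daily * days


# Placeholder rate, not a quote from any provider.
for gpus in (1, 8):
    daily, monthly = gpu_burn(hourly_rate_usd=2.50, gpu_count=gpus)
    print(f"{gpus}x GPU: {daily:,.2f} USD/day, {monthly:,.2f} USD/month")
```

At the placeholder rate, a single GPU left running costs 60 USD per day and 1,800 USD per month; an 8x configuration multiplies both figures by eight.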

AI Infrastructure Cost Calculator

Estimate API, VPS and GPU cloud cost before you build.

Enter rough numbers to estimate cost per run, daily API cost, monthly API cost, GPU cloud exposure and total monthly infrastructure cost. Always verify official pricing before payment.

Your usage assumptions

Formula: token cost = input tokens × input price / 1,000,000 + output tokens × output price / 1,000,000, where both prices are quoted per 1,000,000 tokens. The monthly estimate uses 30 days.

Estimated result

Recommendation: API-first plus a small VPS is likely enough for the first version.
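
For readers who prefer to run the numbers offline, the formula above can be sketched in a few lines. This is an approximation of the calculator's stated formula, not its actual source code: `token_cost` and `monthly_total` are hypothetical names, and all inputs are placeholders to be replaced with figures from the official pricing pages.

```python
def token_cost(input_tokens, output_tokens, input_price, output_price):
    """Cost of one run; prices are in USD per 1,000,000 tokens."""
    return (input_tokens * input_price / 1_000_000
            + output_tokens * output_price / 1_000_000)


def monthly_total(cost_per_run, runs_per_day, vps_monthly=0.0,
                  gpu_hourly=0.0, gpu_hours_per_day=0.0, days=30):
    """Combine API, VPS and GPU cloud into one monthly figure (30-day month)."""
    api = cost_per_run * runs_per_day * days
    gpu = gpu_hourly * gpu_hours_per_day * days
    return {"api": api, "vps": vps_monthly, "gpu": gpu,
            "total": api + vps_monthly + gpu}


# Placeholder inputs for illustration only.
per_run = token_cost(2_000, 500, input_price=1.0, output_price=4.0)
estimate = monthly_total(per_run, runs_per_day=100, vps_monthly=6.0)
print(f"per run: {per_run:.4f} USD")
print(f"monthly: {estimate}")
```

With these inputs, one run costs 0.004 USD, so 100 runs a day cost about 12 USD a month and the total with the VPS is about 18 USD. Setting `gpu_hourly` and `gpu_hours_per_day` shows how quickly a GPU line item can come to dominate the total.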